Embeddings in 3D: how models turn words into coordinates

Last updated: April 18, 2026

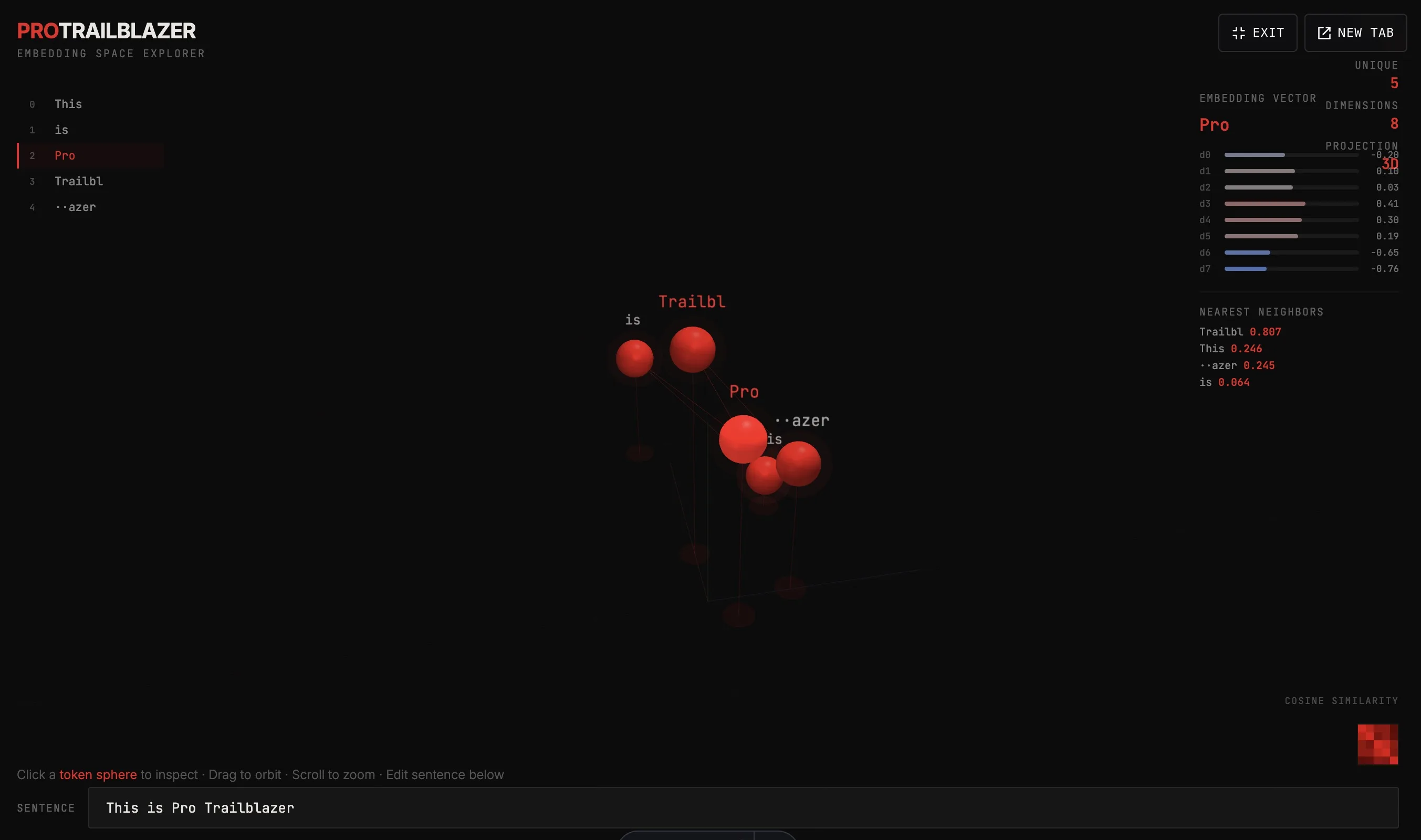

The interactive below tokenizes whatever sentence you give it, computes a synthetic 8-dimensional embedding for each token, and projects those vectors into 3D so you can walk around them. Click any token sphere (or its name in the left rail) to see the vector behind it and the four nearest neighbors by cosine similarity.

Demo

Embedding space explorer

Type a sentence, watch each token become a point in 3D space. Click any token to inspect its 8-dimensional vector and its nearest neighbors by cosine similarity.

What you're seeing

Each red sphere is a from your sentence. Its position in 3D is a compressed projection of an . The closer two spheres are, the more similar their vectors by cosine similarity, where 1.0 means identical direction, 0 means unrelated, and -1 means opposite.

A few things to try:

- Edit the sentence. Change a word, swap word order, add punctuation. Watch how the cluster shifts. Punctuation tokens drift apart from word tokens.

- Compare similar words. Try "cat dog wolf table". The animals tend to cluster, "table" sits further out.

- Click any token. The right panel shows its 8D vector with each dimension drawn as a horizontal bar. Bigger absolute values mean stronger signal in that dimension. The bottom of the panel lists the four nearest neighbors.

- Watch the heatmap. Bottom-right is a token-to-token cosine similarity matrix. Red squares mean similar tokens, dark squares mean unrelated.

How it actually works

This demo uses a deterministic toy embedding (token character codes hashed into 8 dimensions, with a few hand-coded rules for common stopwords and punctuation). Real models do something fundamentally similar but with two big differences:

- Dimension count. Production embeddings are typically 512 to 12,288 dimensions, not 8. The geometry is the same, just much higher-dimensional. We project down to 3D for visualization, the way you would project to 3D for plotting in TensorBoard or UMAP.

- Learned, not hand-coded. Real embeddings are learned during . The model adjusts the numbers token by token across billions of examples until tokens that appear in similar contexts end up near each other in space. No human ever decides "this dimension means animal-ness."

Why this matters: once words are coordinates, meaning becomes geometry. You can add and subtract vectors (the famous "king minus man plus woman lands near queen" property in well-trained spaces). You can find synonyms by nearest-neighbor search. You can compare entire sentences by averaging their token vectors. The whole field of vector search and retrieval-augmented generation rests on this idea.

Key takeaways

- Tokens are represented as points in a high-dimensional space.

- Similar meaning means spatially close. Cosine similarity is the standard distance measure.

- Real embeddings have hundreds to thousands of dimensions, not 8. We project to 3D only to look at them.

- Embeddings are learned during pretraining, not hand-coded. The model finds the structure on its own.