Mixture of Experts: sparse routing for huge models at fast-model cost

Last updated: April 23, 2026

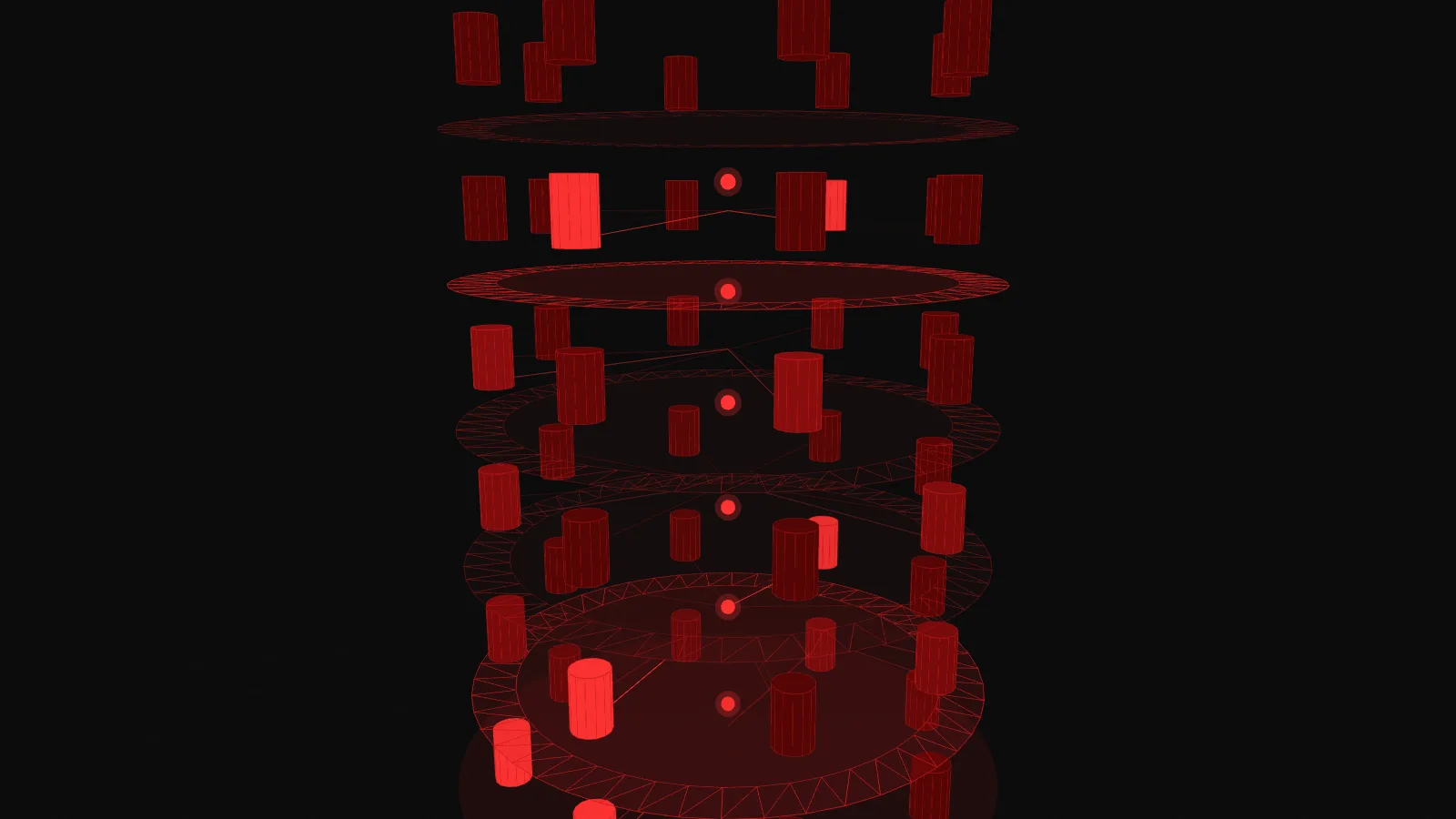

The interactive below shows an MoE architecture routing tokens layer by layer. Switch modes to see a single token move slowly (SINGLE), a full prompt stagger through (SEQUENCE), or every token fire at once the way production inference really works (ALL AT ONCE). Try the three prompts and different expert counts to see how the lit-up path changes.

Demo

Mixture of Experts routing through a transformer stack

A 3D scene of six stacked MoE layers. Tokens rise from the input, and at each layer a gating network picks the top-k experts, lighting up the chosen path.

What you're seeing

Six layers of a transformer stack, rendered vertically. Each layer is a ring of cylindrical towers, one tower per expert. With the default 8 experts per layer that is 48 towers in the scene. The real model it represents would have an attention block at each layer too, but the sim focuses only on the MoE feed-forward block, which is where the routing happens.

A glowing red particle rises through the center of the stack. When it reaches a layer, the router plane pulses, beams fan out from the token to every expert at brightness proportional to the gate score, and then the top-k beams brighten sharply while the rest fade. The chosen experts flash, then settle into a dim glow over two seconds as they hand off to the next layer. Thin lines left behind are the persistent trail, giving you a running picture of every expert the model has used so far.

Four stats in the top right: TOTAL_P is every parameter in every expert summed together (the number that gets put on model cards), ACTIVE_P is what the current token actually touches (a small fraction of the total), TOKEN is the current token being routed, and EXPERTS_HIT is the cumulative count of unique layer-expert pairs activated across the sequence so far. Watch ACTIVE_P stay small when TOTAL_P balloons.

A few things to try in the demo:

- Switch to the code prompt ("def fibonacci(n):"). The same two towers light up at each layer, again and again. That is (simulated) expert specialization: in this sim code tokens have learned to route to the "code experts" consistently.

- Switch to the translate prompt. English and French tokens light different expert groups as they alternate through the sequence. Punctuation routes to its own corner.

- Crank experts per layer to 64. The stack gets much wider but the lit-up path stays just as thin. TOTAL_P jumps dramatically. ACTIVE_P does not move. That is the MoE value proposition in one visual.

- Drop top-k to 1. Routing collapses toward deterministic (every token picks exactly one expert per layer). Raise it to 4 and coverage explodes: EXPERTS_HIT rises fast and the benefit of sparsity shrinks.

- Hit the TOP camera preset in ALL AT ONCE mode. You get a top-down view of one layer, with every token in the prompt lighting its chosen experts simultaneously. That is what a single step of real MoE inference looks like.

How it actually works

In a dense transformer, every token at every layer runs through the same feed-forward network. In an MoE transformer, that single feed-forward block is replaced by a bank of N smaller feed-forward blocks (the experts), plus a tiny neural network called the gate or router. The router takes the token's representation, produces a score for each expert, picks the top-k (typically 2 out of 8 or 64 experts), and sends the token only through those. The outputs of the chosen experts are combined, weighted by their gate scores, and that becomes the layer output.
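The routing step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not any particular model's implementation: the function names, the toy experts, and the made-up router scores are all hypothetical, and renormalizing the gate weights over the chosen experts is one common convention that is assumed here.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_logits, top_k=2):
    """Pick the top-k experts for one token and renormalize their gate weights.

    router_logits: one score per expert (what the router network would output).
    Returns a list of (expert_index, weight) pairs whose weights sum to 1.
    """
    probs = softmax(router_logits)
    # Indices of the k highest-probability experts, best first.
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]

def moe_layer(token, router_logits, experts, top_k=2):
    """Combine the outputs of the chosen experts, weighted by gate score."""
    output = [0.0] * len(token)
    for idx, weight in route_token(router_logits, top_k):
        expert_out = experts[idx](token)  # only the chosen experts ever run
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output

# Toy experts (hypothetical): expert i just scales the token by i + 1.
experts = [lambda x, s=s: [s * v for v in x] for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]  # made-up router scores

routing = route_token(logits, top_k=2)
result = moe_layer([1.0, 2.0], logits, experts, top_k=2)
print(routing)
print(result)
```

With these scores only experts 1 and 4 run; the other six are never called, which is the sparsity the rest of this page is about.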

"Mixture of experts" is a misleading name. There is no pre-training where expert 3 learns French and expert 7 learns Python. Every expert starts with the same architecture and no assigned role. Specialization emerges during training because the router learns to route similar tokens to similar experts, and similar training data refines those experts further, and the whole thing reinforces itself. The result is that any specialization you see is emergent and often illegible. A real MoE does not have a "code expert," it has experts that sometimes process code tokens in subtle patterns that defy neat interpretation.

The sim shows crisp specialization on purpose, because crisp specialization is the teaching point. Real routing is noisier. Do not mistake the visualization for the model's internal structure.

Why does this architecture exist? Three reasons, in order of importance:

- Capacity at constant compute. A dense model with 100B parameters costs 100B-worth of compute per token. An MoE model with 100B total parameters but 10B active runs at 10B-worth. You get the expressive capacity of the big model with the throughput of a smaller one.

- Specialization (emergent). Different tokens can exercise different parameters. Whether or not specialization is "clean," the model has more slots to put distinct patterns, so empirically MoE models often match dense models that are several times larger in total params.

- Training parallelism. Experts can live on different GPUs ("expert parallelism"). This is a major reason MoE is practical at frontier scale. Routing becomes a network communication problem.
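To make the first point concrete, here is a rough parameter-counting sketch. The sizes are hypothetical and chosen only for illustration, the helper `moe_param_counts` is not from any library, and the tiny router weights are ignored.

```python
def moe_param_counts(shared_params, params_per_expert, num_experts, top_k, num_layers):
    """Return (total, active) parameter counts for a simplified MoE stack.

    shared_params: parameters every token uses (attention, embeddings, etc.).
    params_per_expert: size of one expert feed-forward block.
    Router parameters are ignored because they are comparatively tiny.
    """
    total = shared_params + num_layers * num_experts * params_per_expert
    active = shared_params + num_layers * top_k * params_per_expert
    return total, active

# Hypothetical sizes, for illustration only.
SHARED = 2_000_000_000
PER_EXPERT = 100_000_000
LAYERS = 6

for n in (8, 64):
    total, active = moe_param_counts(SHARED, PER_EXPERT, n, top_k=2, num_layers=LAYERS)
    print(f"experts={n:>2}  total={total / 1e9:.1f}B  active={active / 1e9:.1f}B")
```

Under these assumptions, going from 8 to 64 experts per layer raises the total from 6.8B to 40.4B while the active count stays at 3.2B, since per-token compute tracks the active figure rather than the total.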

Why total parameters can mislead

When a model card says "141B parameters," the implied comparison is usually to dense models of similar total size. But for MoE, total parameters and inference cost are decoupled. Mixtral 8x22B has about 141B total params and around 39B active. Its inference cost is closer to a 39B dense model than a 141B one. The important metric is active parameters per token, and most MoE announcements bury that number.

There is one place total parameters do matter: GPU memory. Even inactive experts need to sit on a device somewhere, because any token might need them next. That means MoE models demand more VRAM than their active-parameter cost implies. A 141B-total MoE model loaded on a single GPU needs enough memory for all 141B parameters, not just the 39B it will use. This is why MoE deployments usually span many GPUs and use expert parallelism to spread the memory cost.
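A back-of-the-envelope version of that memory argument, using the parameter figures quoted above. It assumes 16-bit weights (2 bytes per parameter) and counts weights only; activations, the KV cache, and framework overhead would come on top.

```python
def weight_memory_gb(num_params, bytes_per_param=2):
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9

TOTAL_PARAMS = 141e9   # total figure quoted above for Mixtral 8x22B
ACTIVE_PARAMS = 39e9   # active figure quoted above

resident = weight_memory_gb(TOTAL_PARAMS)   # what must be loaded somewhere
touched = weight_memory_gb(ACTIVE_PARAMS)   # what one token actually uses
print(f"resident weights: {resident:.0f} GB, touched per token: {touched:.0f} GB")
```

Under the 2-byte assumption that is roughly 282 GB resident against 78 GB touched per token. Lower-precision weights would shrink both numbers but not the ratio between them.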

The other invisible cost is load balancing. If the router gets lazy and sends most tokens to the same three experts, training destabilizes: those three get overtrained while the rest stall. Every real MoE training recipe includes auxiliary losses that penalize imbalanced routing, plus a "capacity factor" cap that forces the router to spread tokens even when it would rather not. None of this is visible in the finished model. All of it matters to whether the finished model exists at all.
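The text above does not say which auxiliary loss a given model uses, so the sketch below assumes one widely used form (the style popularized by Switch Transformer): the number of experts times the sum, over experts, of the fraction of tokens routed to that expert times the mean router probability for it. The function names, the top-1 simplification, and the default capacity factor of 1.25 are illustrative assumptions.

```python
import math

def load_balance_loss(router_probs, assignments, num_experts):
    """Auxiliary loss of the form N * sum_i(f_i * P_i).

    router_probs: per-token list of router probabilities over experts.
    assignments: per-token chosen expert index (top-1 routing for simplicity).
    f_i is the fraction of tokens sent to expert i, P_i the mean router
    probability for expert i. Perfectly uniform routing gives 1.0.
    """
    num_tokens = len(assignments)
    frac = [0.0] * num_experts
    for e in assignments:
        frac[e] += 1.0 / num_tokens
    mean_prob = [
        sum(p[i] for p in router_probs) / num_tokens for i in range(num_experts)
    ]
    return num_experts * sum(f * p for f, p in zip(frac, mean_prob))

def expert_capacity(num_tokens, num_experts, capacity_factor=1.25):
    """Maximum tokens one expert accepts per batch under a capacity factor."""
    return math.ceil(capacity_factor * num_tokens / num_experts)

# Balanced case: 4 tokens spread evenly over 4 experts.
uniform = [[0.25] * 4 for _ in range(4)]
balanced = load_balance_loss(uniform, [0, 1, 2, 3], 4)

# Collapsed case: the router favors expert 0 for every token.
peaked = [[0.7, 0.1, 0.1, 0.1] for _ in range(4)]
collapsed = load_balance_loss(peaked, [0, 0, 0, 0], 4)

print(balanced, collapsed, expert_capacity(1024, 8))
```

The balanced batch scores 1.0, the minimum for this form, and the collapsed batch scores about 2.8, so adding the term to the training loss pushes the router back toward spreading tokens. With the capacity factor at 1.25, a batch of 1024 tokens over 8 experts caps each expert at 160 tokens.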

For more on the layer architecture MoE replaces, see . For related inference mechanics, the top-k and top-p sampling demo covers how the output side of an LLM works. The full glossary has the rest of the vocabulary.

Key takeaways

- "Mixture of experts" is misleading. Experts are not specialists trained on different domains. They are identical-sized sub-networks that the gating network learns to route to, and any resulting specialization is emergent, not designed.

- The total parameter count in an MoE model is mostly a marketing number. The figure that matters for inference cost is active parameters per token, which is a small fraction of the total.

- Load balancing is harder than routing. Real MoE systems use auxiliary losses and capacity factors to keep expert usage roughly uniform, or training falls over.

- MoE buys capacity at constant compute but not at constant memory. All expert parameters still need to live somewhere. MoE models are memory-hungry even when they are fast.