Autoregressive generation: how an LLM writes one token at a time

Last updated: April 23, 2026

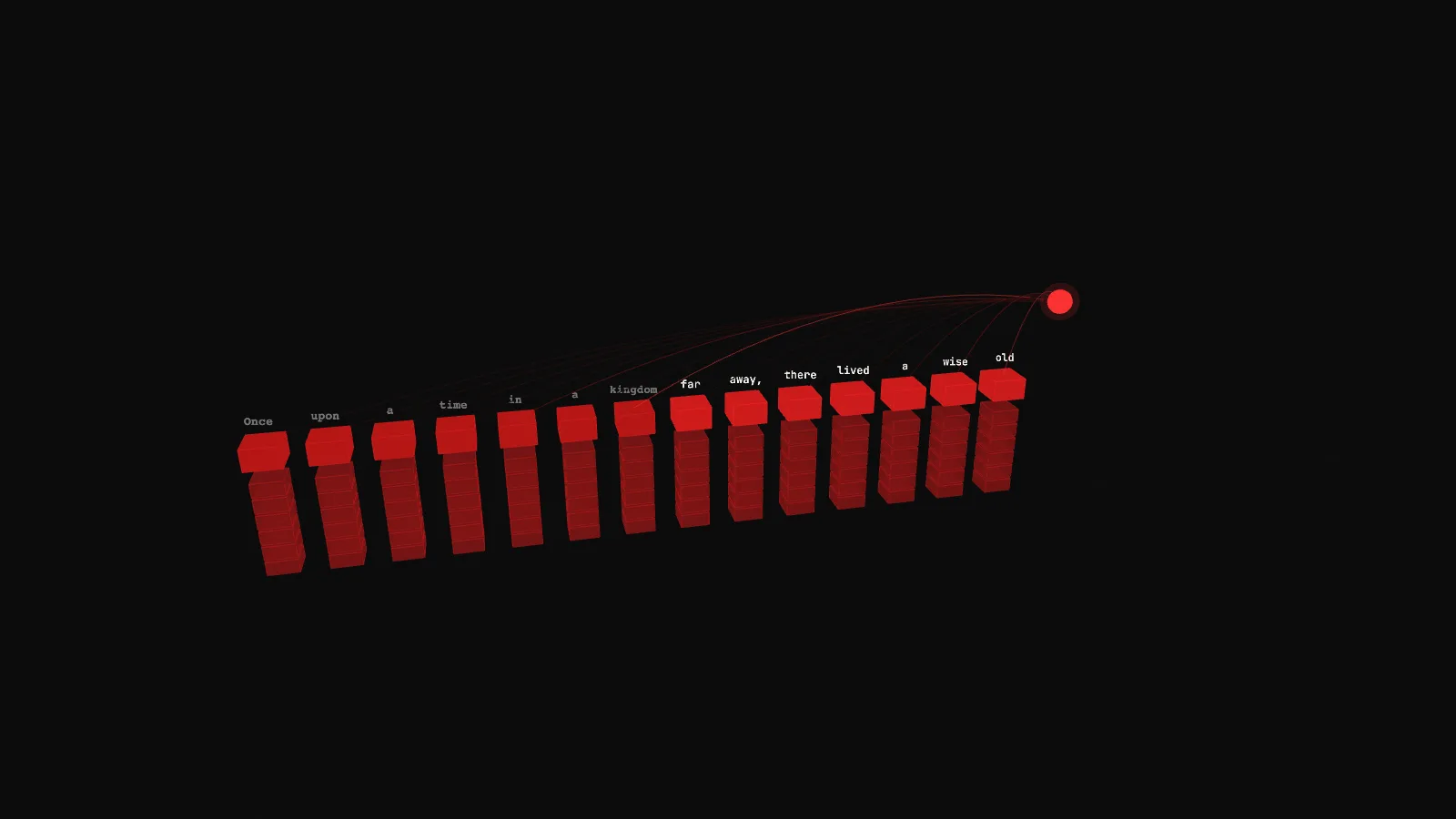

The interactive below walks the generation loop in 3D. A write head rises above the sequence, fans attention beams back to every prior token, and a new token drops in at the end. Try the three prompts, then switch from KV CACHE to NO CACHE and watch the COMPUTE stat go from linear to quadratic.

Demo

Autoregressive generation and the KV cache

A 3D scene of an LLM producing text one token at a time. Each new token attends back to every prior position, the KV cache grows, and NO CACHE mode shows what quadratic inference looks like.

What you're seeing

Tokens are laid out along a horizontal axis as the sequence grows. Each red block is one token, with its text floating above. Prompt tokens (the seven or eight the user typed) are dimmer. Generated tokens (everything after) are brighter. Below every token sits a short vertical stack of six smaller blocks: that is the KV cache for that position, one block per transformer layer.

The glowing sphere at the end of the sequence is the write head, the position being generated right now. At each step the head fans beams back through every prior token. Line brightness is the attention weight: brighter lines mean the model is paying more attention to that token for this step. When the step ends, the write head commits a new token into the slot, the KV cache for that position fills in, and the head shifts one slot right.

A few things to try in the demo:

- Let 20 tokens generate in KV CACHE mode, then flip to NO CACHE. Every prior cache block starts flashing red on each step: the model is recomputing the entire sequence. The COMPUTE stat jumps from about 120 to over 2400. That is what quadratic inference feels like.

- Use the TOP camera preset. Looking straight down makes the attention fan clearly visible as a 2D graph. Notice how each step the write head reads a different pattern of prior tokens.

- Switch to the code prompt. Attention patterns get tighter: mostly local attention, plus a few long-range spikes back to earlier variable names and function definitions. Narrative prompts have broader long-range attention to story anchors like "kingdom".

- Crank the speed slider. At 4 tok/s the sequence fills fast. Watch the KV_CACHE stat climb linearly with SEQ_LEN (half an MB per token in this toy), while COMPUTE per step climbs only slowly in KV CACHE mode and rapidly in NO CACHE mode.

How it actually works

An LLM does not generate text. It generates one token at a time. What looks like a finished sentence is a loop that ran N times, with each iteration depending on every iteration before it. That loop is autoregressive generation.

Each step goes like this. The model takes the full sequence so far (prompt plus whatever has been generated already), runs it through every transformer layer, and produces a probability distribution over the vocabulary for the next position. A sampler picks one token from that distribution. That token gets appended to the sequence. The process repeats until a stop condition is met: an end-of-sequence token, a maximum length, or the user hitting cancel. On a million-token output, the loop runs a million times.
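The loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not a real model: `toy_next_token_logits` is a hypothetical stand-in for the full forward pass, and `VOCAB`, `EOS_ID`, and the seed values are invented for illustration. Only the shape of the loop (score, sample, append, check the stop condition) reflects the text.

```python
import math
import random

VOCAB = ["<eos>", "the", "cat", "sat", "on", "mat"]  # toy vocabulary (assumption)
EOS_ID = 0  # index of the end-of-sequence token in VOCAB


def toy_next_token_logits(token_ids):
    """Hypothetical stand-in for a forward pass through every layer.

    Returns one score per vocabulary entry. A real model computes these from
    the whole sequence; this toy only uses the length and the last token.
    """
    rng = random.Random(len(token_ids) * 31 + token_ids[-1])
    return [rng.uniform(-1.0, 1.0) for _ in VOCAB]


def softmax(logits):
    """Turn raw scores into a probability distribution."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def generate(prompt_ids, max_new_tokens, seed=0):
    """Autoregressive loop: score, sample one token, append, repeat."""
    sampler = random.Random(seed)
    seq = list(prompt_ids)
    for _ in range(max_new_tokens):          # stop condition: max length
        probs = softmax(toy_next_token_logits(seq))
        next_id = sampler.choices(range(len(VOCAB)), weights=probs, k=1)[0]
        seq.append(next_id)
        if next_id == EOS_ID:                # stop condition: end-of-sequence
            break
    return seq


out = generate([1, 2], max_new_tokens=8)
print([VOCAB[i] for i in out])
```

Each pass through the `for` loop is one generation step, so an output of N tokens means N calls to the model.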

Inside each step, the expensive operation is attention. For every position in the sequence, attention computes three vectors: a query, a key, and a value. The model then takes the dot product of the query at position T with every key at positions up to T (that is causal masking), softmaxes those into attention weights, and uses those weights to pool the values. The result becomes the attention layer's output for position T.
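As a minimal sketch of that computation for a single head and a single query: the function below scores one query against the keys it is allowed to see, softmaxes the scores, and pools the values. The division by the square root of the vector size is the standard scaled dot-product detail, which the paragraph above leaves out. The example vectors are made up.

```python
import math


def attend(query, keys, values):
    """Attention for one query over the keys/values visible to it.

    Returns (weights, output): one weight per key, and the weighted
    average of the value vectors.
    """
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    m = max(scores)                      # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output


# Toy keys and values for positions 0..3 (illustrative numbers only).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 2.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [9.0, 9.0]]

T = 2
query_T = [1.0, 0.0]
# Causal masking: position T only sees keys and values at positions 0..T.
weights, output = attend(query_T, keys[: T + 1], values[: T + 1])
print(weights, output)
```

Position 3 never contributes to the output for position 2, because the slice `[: T + 1]` hides it.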

Here is the key observation: when the model generates token T+1, the keys and values for positions 0 to T have not changed. They were computed once, back when those tokens entered the sequence. If you store them, you do not have to recompute them. That is what the KV cache does. It holds the K and V tensors for every layer, for every prior token, in GPU memory. On each new generation step, the model only computes K and V for the new token, appends them to the cache, and attends over the whole cache.
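A small sketch can make the observation checkable. It assumes a single attention layer with one head, toy 2x2 projection matrices (`W_Q`, `W_K`, `W_V` are invented numbers), and made-up token embeddings. The `KVCache` class and `step_without_cache` function are hypothetical names for illustration. The point is only that the cached step and the recompute-everything step give the same output.

```python
import math

# Toy projection matrices (assumptions, not from any real model).
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [-0.2, 0.4]]
W_V = [[0.3, -0.1], [0.2, 0.6]]


def project(x, w):
    """Multiply row vector x by matrix w (given as a list of rows)."""
    return [sum(xi * wi for xi, wi in zip(x, col)) for col in zip(*w)]


def attend(query, keys, values):
    """Scaled dot-product attention for one query; returns the pooled values."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [
        sum((e / total) * v[d] for e, v in zip(exps, values))
        for d in range(len(values[0]))
    ]


class KVCache:
    """Stores K and V for every token seen so far (one layer, one head)."""

    def __init__(self):
        self.keys = []
        self.values = []

    def step(self, x):
        # Only the new token's K and V are computed; earlier ones are reused.
        self.keys.append(project(x, W_K))
        self.values.append(project(x, W_V))
        return attend(project(x, W_Q), self.keys, self.values)


def step_without_cache(xs):
    # Recompute K and V for every token in the sequence, every step.
    keys = [project(x, W_K) for x in xs]
    values = [project(x, W_V) for x in xs]
    return attend(project(xs[-1], W_Q), keys, values)


embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
cache = KVCache()
for t, x in enumerate(embeddings):
    cached = cache.step(x)
    recomputed = step_without_cache(embeddings[: t + 1])
    assert all(math.isclose(a, b) for a, b in zip(cached, recomputed))
print("cached and recomputed outputs match;", len(cache.keys), "positions cached")
```

The cache grows by one K vector and one V vector per step, which is the growing stack of blocks in the demo.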

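Without this cache, generating token T+1 would require recomputing K and V for tokens 0 through T, then redoing attention at every one of those positions. With the cache, a step does O(T) attention work: one new query against T keys. Without it, a step does O(T squared): T queries, each against up to T keys. That is the linear-to-quadratic jump in the demo's COMPUTE stat. Summed over N generation steps, total attention cost is O(N squared) with the cache and O(N cubed) without it. At a 100-token output that is not a disaster. At a 100,000-token output, that extra factor is the difference between a real-time response and a machine that is never going to finish.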
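One way to make the gap concrete is to count query-key dot products in a single attention layer. The accounting here is an assumption of this sketch, not a measurement: the cached path scores one new query against t keys, the no-cache path re-runs causal attention at all t positions, and generation starts from an empty prompt. The function name `attention_dot_products` is hypothetical.

```python
def attention_dot_products(n_steps, use_cache):
    """Total query-key dot products in one layer over n_steps of generation."""
    total = 0
    for t in range(1, n_steps + 1):      # t = sequence length at this step
        if use_cache:
            total += t                   # one query against t cached keys
        else:
            total += t * (t + 1) // 2    # causal attention redone at all t positions
    return total


for n in (100, 1_000, 10_000):
    cached = attention_dot_products(n, use_cache=True)
    uncached = attention_dot_products(n, use_cache=False)
    print(f"N={n}: cached={cached}, uncached={uncached}, ratio={uncached / cached:.1f}")
```

Under this accounting the ratio works out to (N + 2) / 3, so the penalty for dropping the cache grows with the length of the output rather than staying a fixed multiple.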

Why the KV cache is also the problem

Compute is not the main constraint on long contexts in production. Memory is. The KV cache has to live on the same GPU (or GPUs) running the model, and it grows linearly with sequence length.

Do the math for even a modest model. Take a 32-layer model with a 4096-dimension hidden state (roughly the shape of a 7B-parameter model), using standard multi-head attention and running at FP16 (2 bytes per number). For every token, the cache needs K and V at every layer. That is 32 layers times 4096 dims times 2 (K and V) times 2 bytes = 512 KB per token. A 100,000-token context needs about 50 GB of KV cache, on top of the model weights themselves. Most single GPUs do not have that much memory.
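The arithmetic above can be checked directly. The helper name `kv_cache_bytes_per_token` is made up for this sketch, and it assumes every layer stores full-width K and V vectors with no sharing or compression.

```python
def kv_cache_bytes_per_token(n_layers, hidden_dim, bytes_per_value=2):
    """Bytes of KV cache added per token: K and V at every layer."""
    return n_layers * hidden_dim * 2 * bytes_per_value  # the 2 is for K and V


per_token = kv_cache_bytes_per_token(n_layers=32, hidden_dim=4096)
total = per_token * 100_000

print(f"{per_token / 1024:.0f} KiB per token")       # 512 KiB per token
print(f"{total / 1e9:.1f} GB for 100,000 tokens")    # 52.4 GB for 100,000 tokens
print(f"{total / 2**30:.1f} GiB for 100,000 tokens") # 48.8 GiB for 100,000 tokens
```

Depending on whether you count in decimal GB or binary GiB, the total lands just above or just below the round 50 GB figure.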

This is why every production serving stack is heavily optimized around KV-cache management. Techniques like paged attention (serve many requests from a shared pool of cache pages), grouped-query attention (fewer K/V heads than Q heads, shrinking the cache per layer), sliding-window attention (only keep the last W tokens), and quantized caches (store K and V at 4 or 8 bits instead of 16) all exist for the same reason: the KV cache is the main memory cost of long-context inference, and everyone is trying to make it smaller.
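To get a feel for how much three of these techniques buy, the sketch below extends the per-token arithmetic with a KV head count, a bit width, and an optional window. All parameter values are illustrative assumptions (32 query heads of dimension 128, 8 KV heads for the grouped-query case, a 4096-token window), and the function `kv_cache_bytes` is hypothetical. It ignores the small overhead real quantized caches carry for scale factors, and it does not model paged attention, which changes how cache memory is allocated rather than how much one request needs.

```python
def kv_cache_bytes(n_tokens, n_layers, n_kv_heads, head_dim,
                   bits_per_value=16, window=None):
    """Approximate KV cache size in bytes for one sequence."""
    # Sliding-window attention keeps only the last `window` tokens.
    kept = n_tokens if window is None else min(n_tokens, window)
    values_per_token = n_layers * n_kv_heads * head_dim * 2  # K and V
    return kept * values_per_token * bits_per_value // 8


N = 100_000
baseline = kv_cache_bytes(N, n_layers=32, n_kv_heads=32, head_dim=128)
gqa = kv_cache_bytes(N, n_layers=32, n_kv_heads=8, head_dim=128)
int4 = kv_cache_bytes(N, n_layers=32, n_kv_heads=32, head_dim=128, bits_per_value=4)
windowed = kv_cache_bytes(N, n_layers=32, n_kv_heads=32, head_dim=128, window=4096)

for name, size in [("baseline", baseline), ("grouped-query", gqa),
                   ("4-bit cache", int4), ("sliding window", windowed)]:
    print(f"{name:>15}: {size / 1e9:6.1f} GB")
```

With these numbers, grouped-query attention and a 4-bit cache each cut the baseline by 4x, and the sliding window caps the cache at a fixed size no matter how long the sequence gets. The techniques trade off differently in quality and are often combined.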

For related concepts, the top-k and top-p demo covers the sampling step (what happens to the distribution after attention), and the glossary has the full vocabulary for inference-side terminology.

Key takeaways

- An LLM does not generate text. It generates one token at a time. What looks like a finished sentence is a loop that ran N times, with each iteration depending on every iteration before it.

- The KV cache is not a cache in the usual "memoize a function" sense. It is the actual intermediate state of the attention mechanism (keys and values) for every prior token position, kept in GPU memory so it is not recomputed every step.

- With the KV cache, per-step attention cost is linear in sequence length. Total generation cost is the sum from 1 to N, which is quadratic. Long outputs get expensive because cost accumulates with sequence length, not because each step is expensive.

- Memory is the usual bottleneck for long contexts, not compute. A 100K-token context can consume tens of GB of KV cache on top of the model weights, which is why production stacks spend so much effort on paged attention, grouped-query attention, and quantized caches.